String SimAI

AI Simulation Model for Piano Based on DeepXDE

2026.3

Project Members

Liu Zhaorui

Hu Qianru

Liu Lin

Qiu Yang

MY ROLE

Model Training

Web Development

TOOLS

Python

Flask

DeepXDE

DeepSeek

SUPPORTED BY

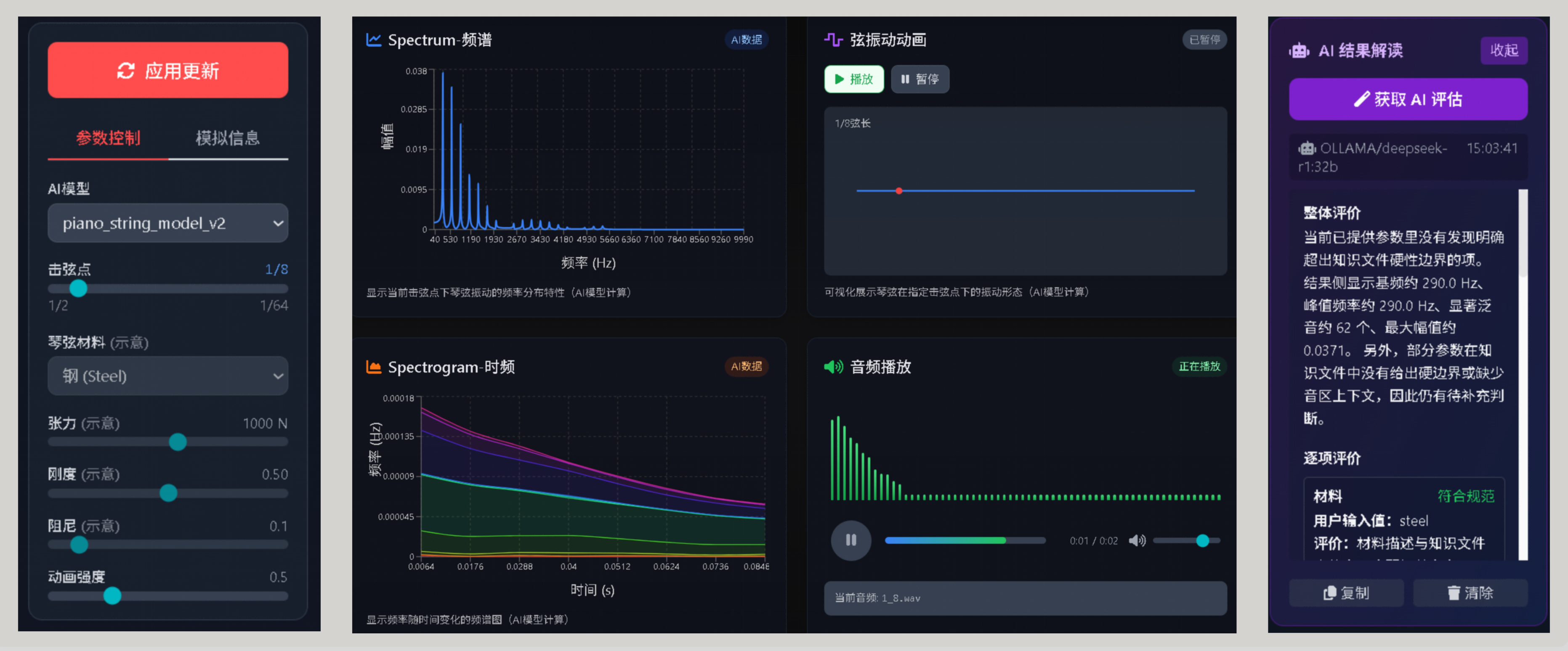

This system is an independently developed AI tool for teaching and supporting research in piano acoustics and string vibration analysis, built on the open-source DeepXDE physics-informed machine learning framework. It combines physical modeling of string vibration, visual analysis, and large language model (LLM) evaluation. Given parameters such as the striking point, it quickly generates string vibration simulation results and displays them in forms such as a frequency spectrum, a time-frequency diagram, and a vibration animation. The system also evaluates the results automatically against a knowledge base of piano design parameters, helping students understand how parameter changes affect timbre, overtones, and vibration characteristics.

Main functions:

1. Accept string parameters as input and generate simulated string vibration results.

2. Display an animation of the string vibration so that its patterns in time and space can be observed directly.

3. Display a frequency spectrum for analyzing the overtone distribution, main peak positions, and frequency structure.

4. Display a time-frequency diagram for observing how frequency components decay and change over time.

5. Call a local LLM to generate analysis conclusions and parameter tuning suggestions automatically.

6. Use a RAG (retrieval-augmented generation) knowledge base so that the AI produces output that follows preset specifications and evaluation criteria.
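
To illustrate how the striking point shapes the overtone distribution mentioned in functions 1 and 3, here is a minimal sketch. It assumes an ideal flexible string fixed at both ends and struck by a point impulse, for which the displacement amplitude of the n-th harmonic is proportional to sin(nπx0/L)/n. This is a textbook approximation, not the trained DeepXDE model, and the function name `struck_string_mode_amplitudes` is hypothetical.

```python
import math


def struck_string_mode_amplitudes(strike_ratio, n_modes=10):
    """Relative harmonic amplitudes for an ideal struck string.

    Assumption: ideal flexible string, fixed ends, point impulse at
    x0 = strike_ratio * L, so a_n is proportional to sin(n*pi*x0/L) / n.
    """
    if not 0.0 < strike_ratio < 1.0:
        raise ValueError("strike_ratio must lie strictly between 0 and 1")
    raw = [
        abs(math.sin(n * math.pi * strike_ratio)) / n
        for n in range(1, n_modes + 1)
    ]
    peak = max(raw)
    # Normalize so the strongest harmonic has amplitude 1.
    return [a / peak for a in raw]


# Striking at 1/7 of the string length puts the hammer on a node of the
# 7th harmonic, so that harmonic is (numerically almost) absent.
amps = struck_string_mode_amplitudes(1 / 7, n_modes=8)
for n, a in enumerate(amps, start=1):
    print(f"harmonic {n}: relative amplitude {a:.3f}")
```

Under these assumptions, moving the striking point changes which harmonics are suppressed, which is the kind of parameter-to-timbre relationship the spectrum view is meant to show.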

The core purpose of training an AI model of the string is to approximate complex physical computations more efficiently.

Traditional string vibration analysis usually relies on analytical models, numerical simulations, or experimental measurements.

Although these methods are reliable, they have several problems in teaching or interactive scenarios:

1) The calculation process is complex and not intuitive.

2) The cost of recalculating after parameter changes is high.

3) It is difficult to achieve real-time interactive display.

4) Students find it difficult to associate formulas, images, and auditory results.

The trained AI model predicts the string's response faster while preserving the features of the underlying physical laws, enabling near real-time visualization and interactive analysis. This makes it better suited to classroom demonstrations and parameter exploration.
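
One common way for a network to retain physical law features is to penalize the residual of the governing equation during training, as physics-informed networks do. The formulation below is a sketch that assumes an ideal flexible string of length L with fixed ends. The tension T, linear density μ, initial shape f, initial velocity g, and loss weights w_r, w_b, w_0 are illustrative symbols, and the project's actual model may include additional terms such as stiffness and damping.

```latex
\begin{aligned}
&\frac{\partial^2 u}{\partial t^2} = c^2 \frac{\partial^2 u}{\partial x^2},
\qquad c = \sqrt{T/\mu},
\qquad u(0,t) = u(L,t) = 0, \\[4pt]
&\mathcal{L}(\theta) =
  \frac{w_r}{N_r} \sum_{i=1}^{N_r}
    \left| \partial_{tt} u_\theta(x_i,t_i) - c^2\, \partial_{xx} u_\theta(x_i,t_i) \right|^2
  + \frac{w_b}{N_b} \sum_{j=1}^{N_b}
    \left( \left| u_\theta(0,t_j) \right|^2 + \left| u_\theta(L,t_j) \right|^2 \right) \\
&\qquad\quad
  + \frac{w_0}{N_0} \sum_{k=1}^{N_0}
    \left( \left| u_\theta(x_k,0) - f(x_k) \right|^2
         + \left| \partial_t u_\theta(x_k,0) - g(x_k) \right|^2 \right)
\end{aligned}
```

Here u_θ is the network's prediction of the string displacement. Once trained, evaluating u_θ is a single forward pass, which is why the prediction can be fast enough for interactive use.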

The LLM is used in this system to explain and evaluate the output of the physical model. Its main functions are:

1. Convert the numerical and graphical results output by the model into natural language explanations.

2. Generate conclusions, evidence, and recommendations based on teaching objectives.

3. Help students understand why the current parameters produce such vibrations and spectral results.

4. Generate standardized evaluation text according to the preset format.

5. Provide language support for classroom, presentation, and training tasks.

Introducing RAG into the LLM:

With RAG, the LLM no longer relies only on its own general knowledge when generating evaluations. It first consults the professional knowledge files provided and then generates results based on their content, so the output is more in line with teaching standards and professional norms.

The benefits of doing so are:

1. Output aligns more closely with curriculum standards and research objectives.

2. Evaluations are more professionally grounded.

3. The AI evaluates according to defined formats and rules.

4. Updating the knowledge files is enough to keep improving the AI output, with no need to retrain the large model.
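
The retrieve-then-generate idea can be sketched in a few lines. The example below is a simplified illustration, not the system's actual pipeline: it ranks knowledge notes by bag-of-words cosine similarity (a real deployment would more likely use embedding-based retrieval) and assembles a prompt that asks for a fixed output format. The names `retrieve`, `build_prompt`, and `KNOWLEDGE`, as well as the sample notes, are hypothetical.

```python
import math
import re
from collections import Counter


def tokenize(text):
    """Lowercase the text and split it into alphabetic word tokens."""
    return re.findall(r"[a-z]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(a[t] * b[t] for t in a if t in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


def retrieve(query, documents, top_k=2):
    """Return the top_k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(
        documents,
        key=lambda d: cosine(q, Counter(tokenize(d))),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(query, documents, top_k=2):
    """Put retrieved notes ahead of the task and fix the answer format."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents, top_k))
    return (
        "Use the reference notes below before relying on general knowledge.\n"
        f"Reference notes:\n{context}\n\n"
        f"Task: {query}\n"
        "Answer with three sections: Conclusion, Evidence, Recommendation."
    )


# Hypothetical knowledge notes standing in for the real knowledge files.
KNOWLEDGE = [
    "Striking a string at a node of a harmonic suppresses that harmonic.",
    "Higher string tension raises the fundamental frequency.",
    "Evaluation reports list a conclusion, the supporting evidence, and a recommendation.",
]

QUESTION = "Why is the seventh harmonic weak for this striking point?"
prompt = build_prompt(QUESTION, KNOWLEDGE, top_k=1)
print(prompt)
```

Because the notes live outside the model, editing `KNOWLEDGE` changes what the LLM sees on the next request, which matches benefit 4: the output improves without retraining.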

Results Visualization